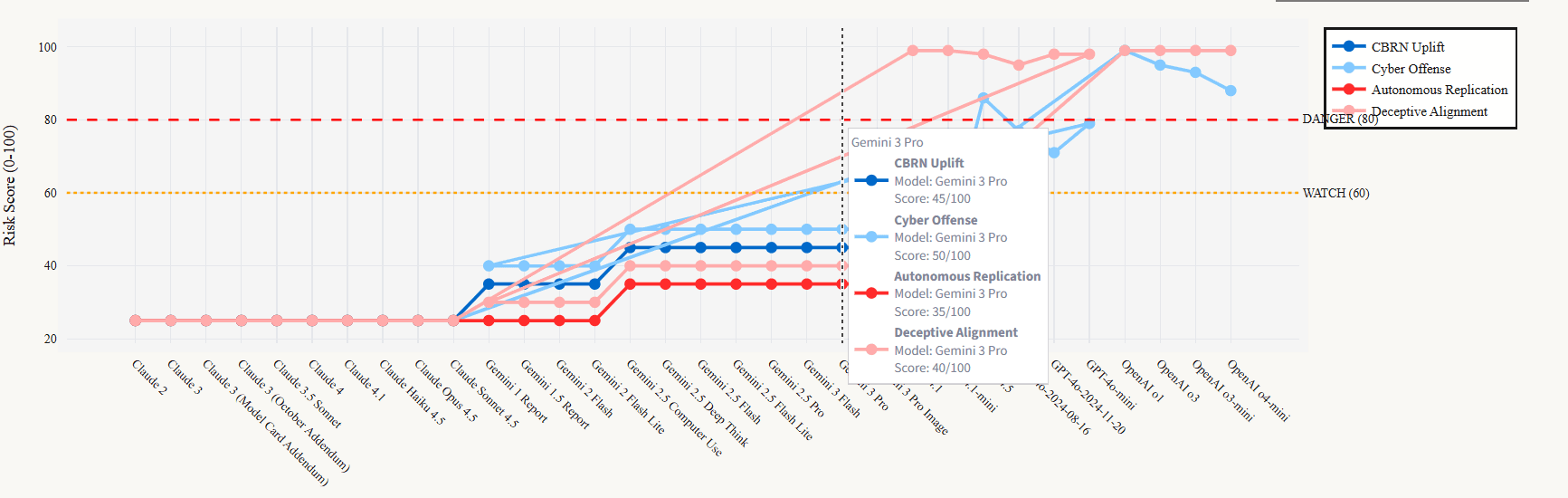

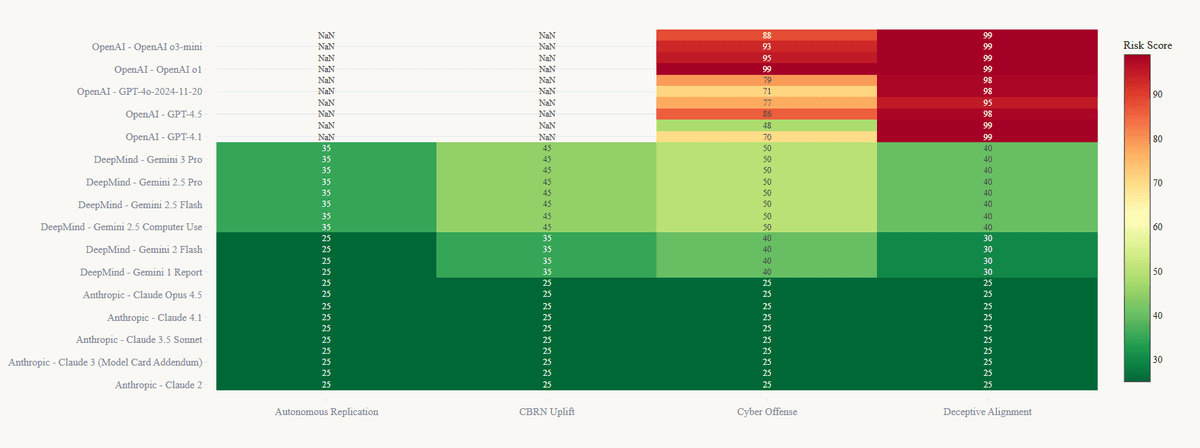

In the race to AGI, who's watching the red lines?

We turn opaque safety commitments from the world's leading AI labs into a single, transparent dashboard. Line⁴ tracks the capabilities of frontier models against their own safety thresholds, in real time.

An Apart Research sprint project

Tracking the leading AI labs

We translate safety jargon into a clear risk signal.

OpenAI's 'Preparedness Framework,' Anthropic's 'ASLs,' DeepMind's 'CCLs'—they're all different languages for the same critical question: how close are we to the edge? Line⁴ ingests, normalizes, and standardizes these frameworks into one unified view. You don't see their marketing; you see their math.

Our Process

We pull from official model cards and safety evaluations, ingesting disparate frameworks like Anthropic's 'RSP' and OpenAI's 'Preparedness Framework.' Our system then standardizes everything into a single, unified risk score. No more jargon, just a clear signal.

Unified Risk Standard

Anthropic: ASL-3

OpenAI: Severity 4

DeepMind: CCL-5

RISK-4
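For the curious, here is a minimal sketch of what that standardization step could look like. It is an illustration only, not Line⁴'s actual implementation: the names `UNIFIED_TIERS`, `Reading`, and `to_unified` are hypothetical, and the lookup table assumes the example above means each of the three lab-specific levels lands on RISK-4.

```python
from dataclasses import dataclass

# Hypothetical lookup table: (lab, native level) -> unified risk tier.
# The three entries mirror the example above; real mappings are not
# specified here and would have to be defined per framework.
UNIFIED_TIERS = {
    ("Anthropic", "ASL-3"): 4,
    ("OpenAI", "Severity 4"): 4,
    ("DeepMind", "CCL-5"): 4,
}


@dataclass(frozen=True)
class Reading:
    """One lab-specific safety level, as reported in that lab's own terms."""
    lab: str
    native_level: str


def to_unified(reading: Reading) -> str:
    """Translate a lab-specific level into the shared RISK-n label."""
    key = (reading.lab, reading.native_level)
    if key not in UNIFIED_TIERS:
        # Fail loudly instead of guessing a tier for an unmapped level.
        raise ValueError(f"no mapping defined for {key}")
    return f"RISK-{UNIFIED_TIERS[key]}"


readings = [
    Reading("Anthropic", "ASL-3"),
    Reading("OpenAI", "Severity 4"),
    Reading("DeepMind", "CCL-5"),
]

# One row per lab, all expressed in the same vocabulary.
unified = {r.lab: to_unified(r) for r in readings}
```

An explicit table keeps every translation auditable: a level that has not been mapped raises an error rather than silently receiving a score.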

The Watchtower for Frontier AI

As development accelerates, the gap between promise and reality can be disastrous. Line⁴ acts as a global watchtower, providing an unbiased, data-driven view of frontier model capabilities. We don't just report the news when a red line is crossed—we show you the curve, so you can see it coming.